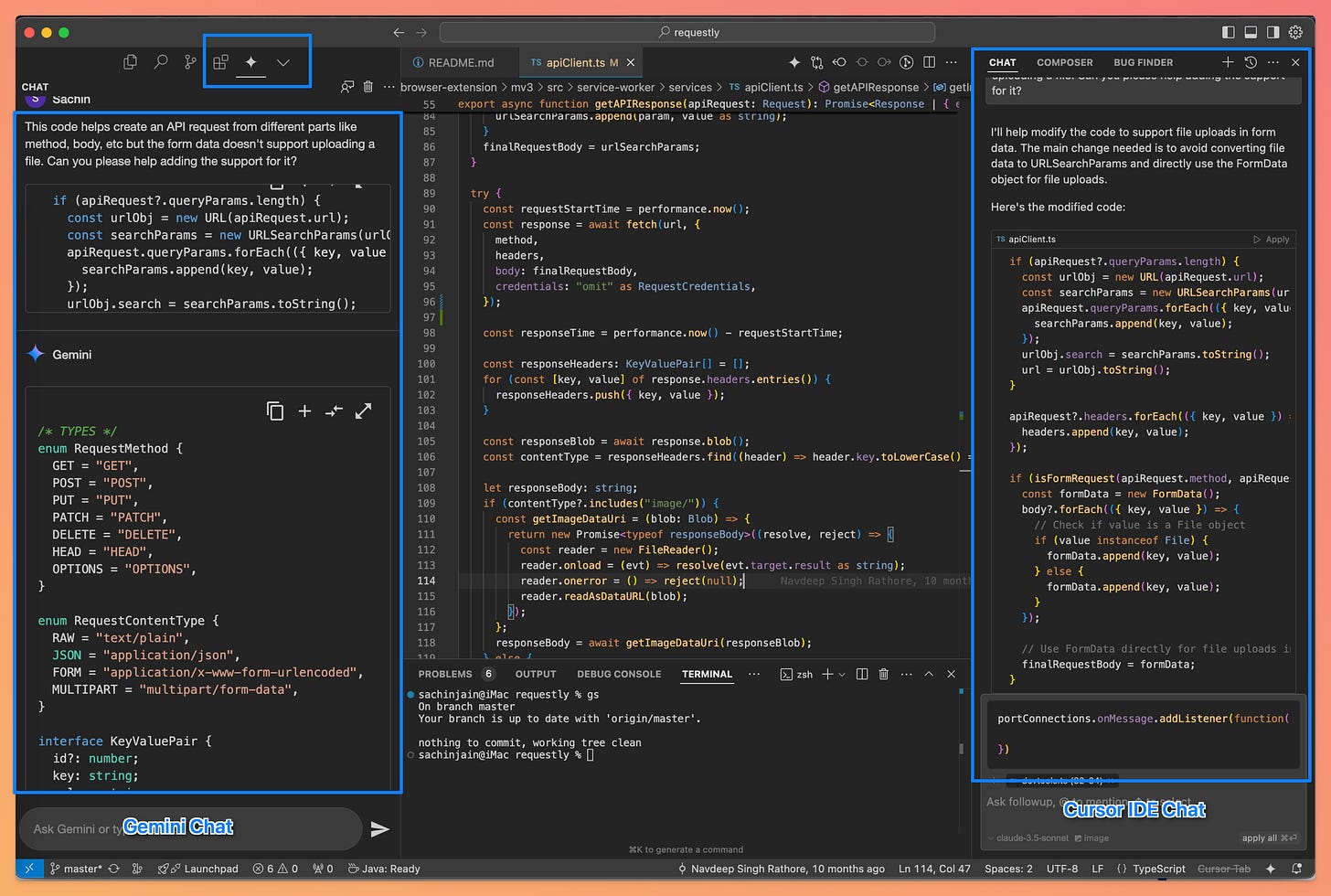

AI Coding Assistant - Gemini Code Assist vs Cursor IDE Comparison

Google has released a free* AI coding assistant for the developer community, with higher code completion limits and roughly 4x the token context of competing tools. This article compares Gemini Code Assist and Cursor on UX, accuracy, pricing, and more.

Introduction

Google recently launched “Gemini Code Assist for Individuals,” further fueling the AI coding assistant war. What makes it more interesting is that Gemini Code Assist allows up to 180,000 code completions per month, 90 times the 2,000 provided by competitors like GitHub Copilot or Cursor AI. Its 128,000-token context is also four times larger than what other tools offer.

Having worked with Cursor AI for a while, primarily for exploratory coding and basic development, I gave Gemini Code Assist a quick try. This article is an outcome of the comparison.

Preface

I used both AI coding assistants on the open-source Requestly GitHub repo.

Requestly is an open-source alternative to Postman and Charles Proxy. I ran my prompts against apiClient.ts, which is primarily responsible for composing an HTTP request from its parts: URL, HTTP method, request body, URL params, etc. The complete source code is available in the Requestly GitHub repo (see References).
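To make "composing an HTTP request from its parts" concrete, here is a minimal TypeScript sketch of the idea. It is not the code from Requestly's apiClient.ts; the names `RequestParts`, `ComposedRequest`, and `composeRequest` are hypothetical and exist only for illustration.

```typescript
// Hypothetical shapes for illustration; not the types used in Requestly's apiClient.ts.
interface RequestParts {
  url: string;
  method: "GET" | "POST" | "PUT" | "PATCH" | "DELETE";
  queryParams?: Record<string, string>;
  headers?: Record<string, string>;
  body?: string;
}

interface ComposedRequest {
  url: string;
  init: { method: string; headers: Record<string, string>; body?: string };
}

function composeRequest(parts: RequestParts): ComposedRequest {
  const params = parts.queryParams ?? {};

  // Encode each key/value pair so special characters survive in the URL.
  const query = Object.keys(params)
    .map((key) => `${encodeURIComponent(key)}=${encodeURIComponent(params[key])}`)
    .join("&");

  // Use "&" if the URL already carries a query string, "?" otherwise.
  const separator = parts.url.indexOf("?") === -1 ? "?" : "&";
  const url = query ? parts.url + separator + query : parts.url;

  const init: ComposedRequest["init"] = {
    method: parts.method,
    headers: { ...(parts.headers ?? {}) },
  };

  // GET requests conventionally carry no body, so attach it only for other methods.
  if (parts.body !== undefined && parts.method !== "GET") {
    init.body = parts.body;
  }

  return { url, init };
}

const composed = composeRequest({
  url: "https://example.com/users",
  method: "POST",
  queryParams: { page: "2", q: "a b" },
  headers: { "Content-Type": "application/json" },
  body: '{"name":"test"}',
});
```

The resulting `composed.url` and `composed.init` could then be handed to an HTTP client such as `fetch`. Asking an assistant to add file upload support means extending exactly this kind of function, which is why it makes a reasonable test bed.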

User Experience

Chat-Window Position

By default, the Cursor AI Chat Window is on the right, whereas the Gemini Chat Window is on the left. Since Gemini Code Assist is installed as a VS Code extension, it sits in the primary toolbar along with other extensions.

When Cursor Chat is opened on the right and in a sticky position, it doesn’t impact navigation between files, searching the code, etc. You can continue to code and use Cursor Chat together.

Since Gemini Code Assist is on the left, it competes for space with the File Navigation panel and the other options in the primary toolbar, so you have to switch back and forth between Files and the coding assistant to use them together.

This might be a little subjective, but I like the Cursor Chat experience much better than Gemini Assist in terms of how you interact with the AI Chat while writing and understanding some code.

This is a minor thing, but I do like Cursor’s font size and font style much better than Gemini’s styling.

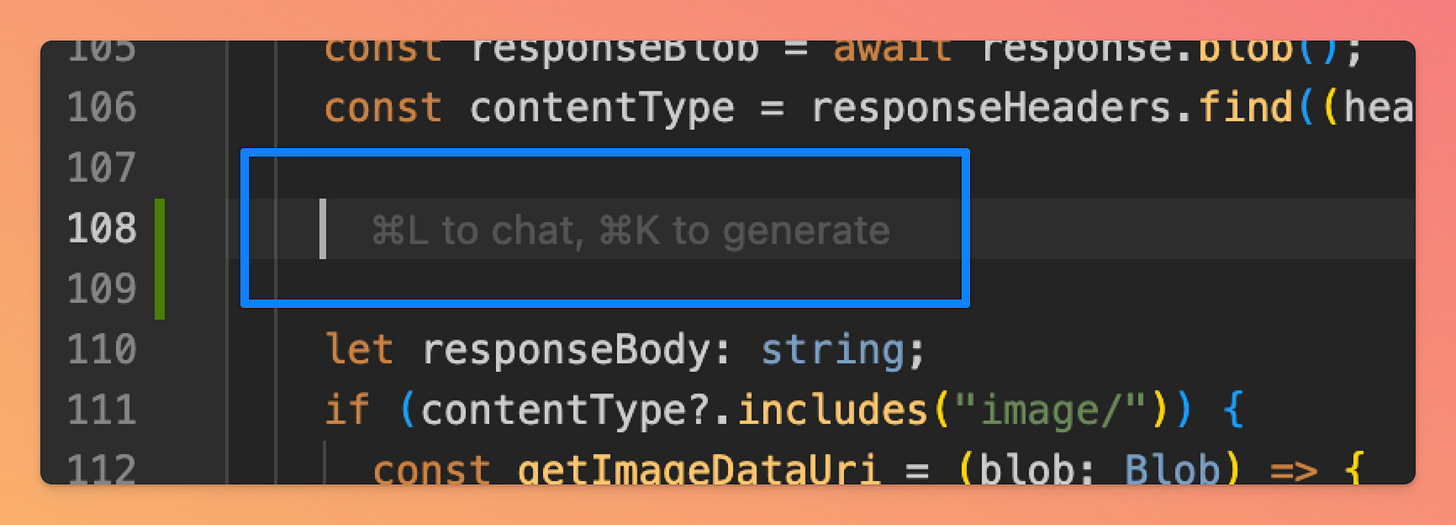

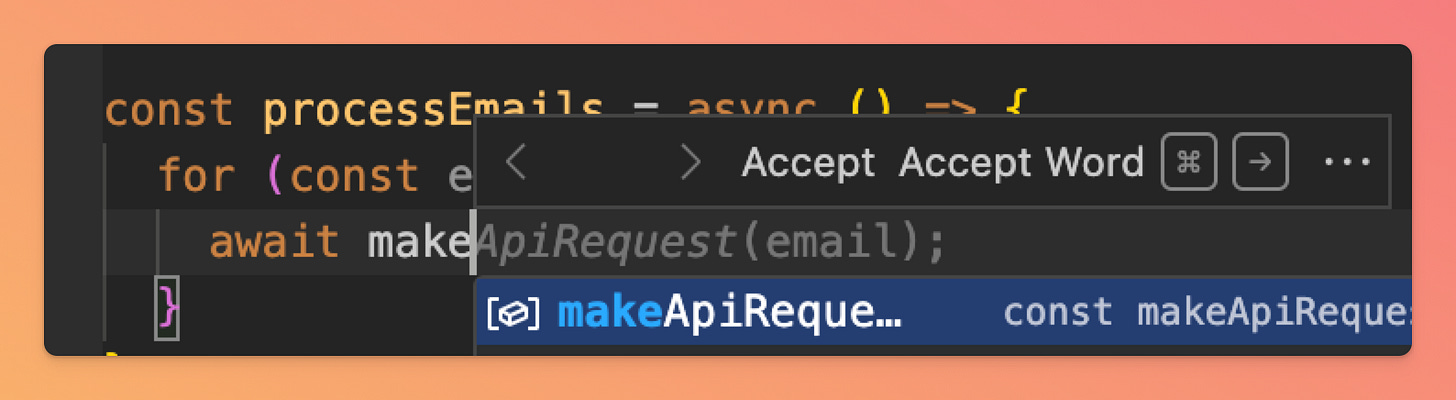

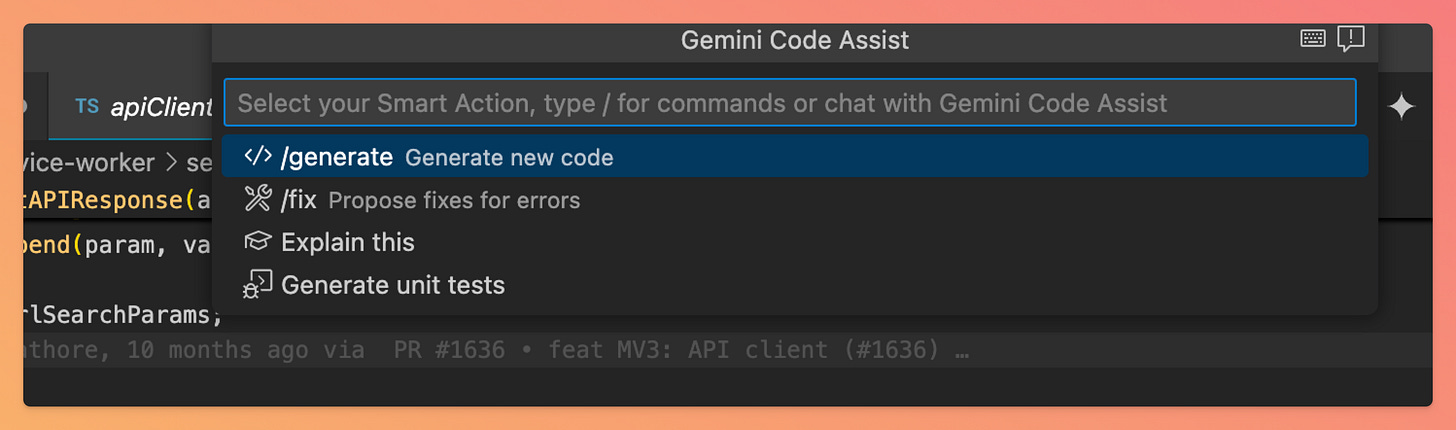

Code Autocomplete Experience

Cursor offers in-place code editing, generation, and an option to trigger chat.

In Cursor IDE, you can use cmd+L to chat, cmd+K to generate code, and select a piece of code to refactor it.

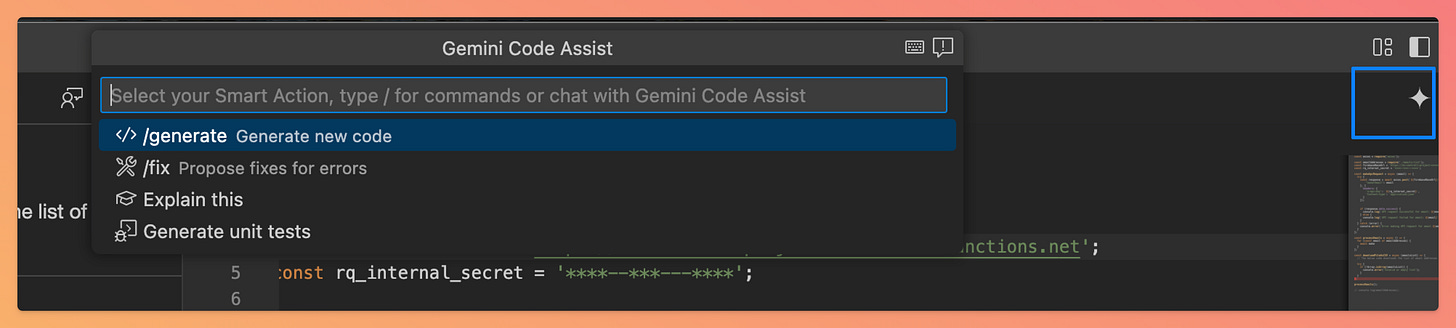

Gemini shows a small toolbar with two options:

Use the Tab key to accept the complete suggestion

Use the cmd + right arrow key to accept a word

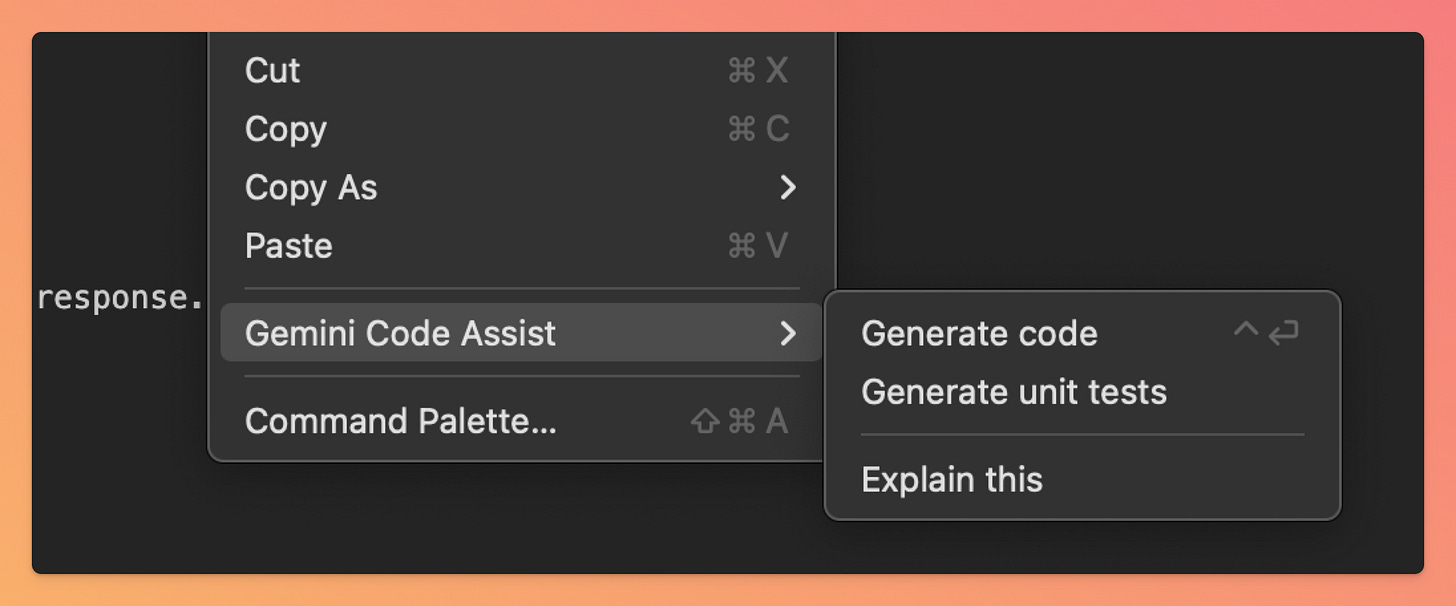

For new code generation, Gemini offers an icon/button on the top toolbar, or you can right-click and select Gemini Code Assist.

Technically, Gemini provides similar capabilities, but I believe (again, this is subjective) that Cursor’s code generation experience is more integrated with the codebase.

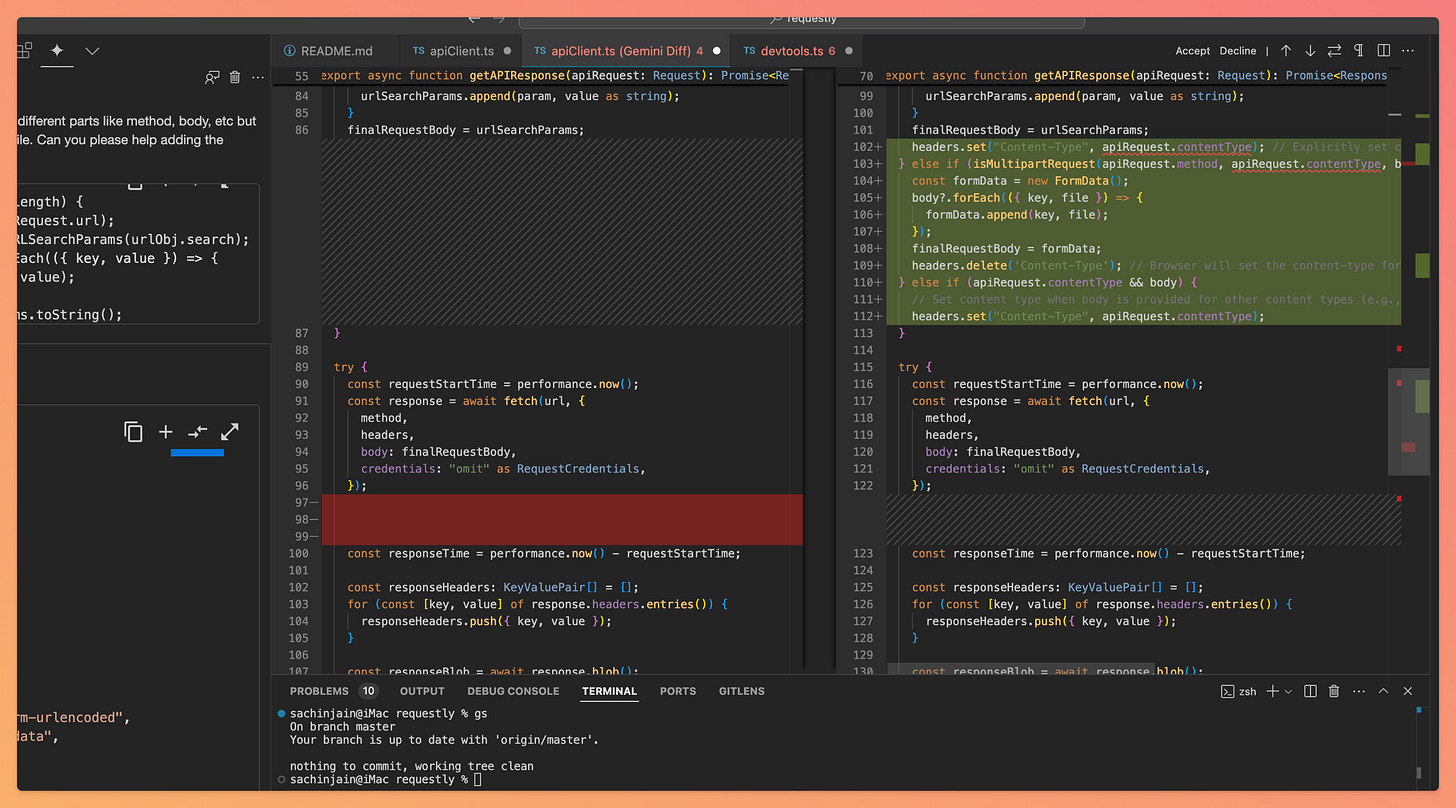

Code Diff

This is one of the things I really like about Gemini Code Assist. When you ask it to generate or refactor a piece of code, you can diff the generated code against any file open in the IDE.

This lets you review and compare the AI-generated code before applying it.

Troubleshooting using error screenshots

Gemini doesn’t support uploading images, while Cursor does and allows you to ask questions based on the uploaded image.

This is helpful in troubleshooting. You can upload a screenshot of an exception from Chrome DevTools or the browser, or of any build or release issue.

For example, I’ve given Cursor a screenshot of an error from a web application and asked it to help me understand why the error could be happening.

I haven’t used it, but I guess you can also upload an image from Figma and ask Cursor to write equivalent React and CSS code.

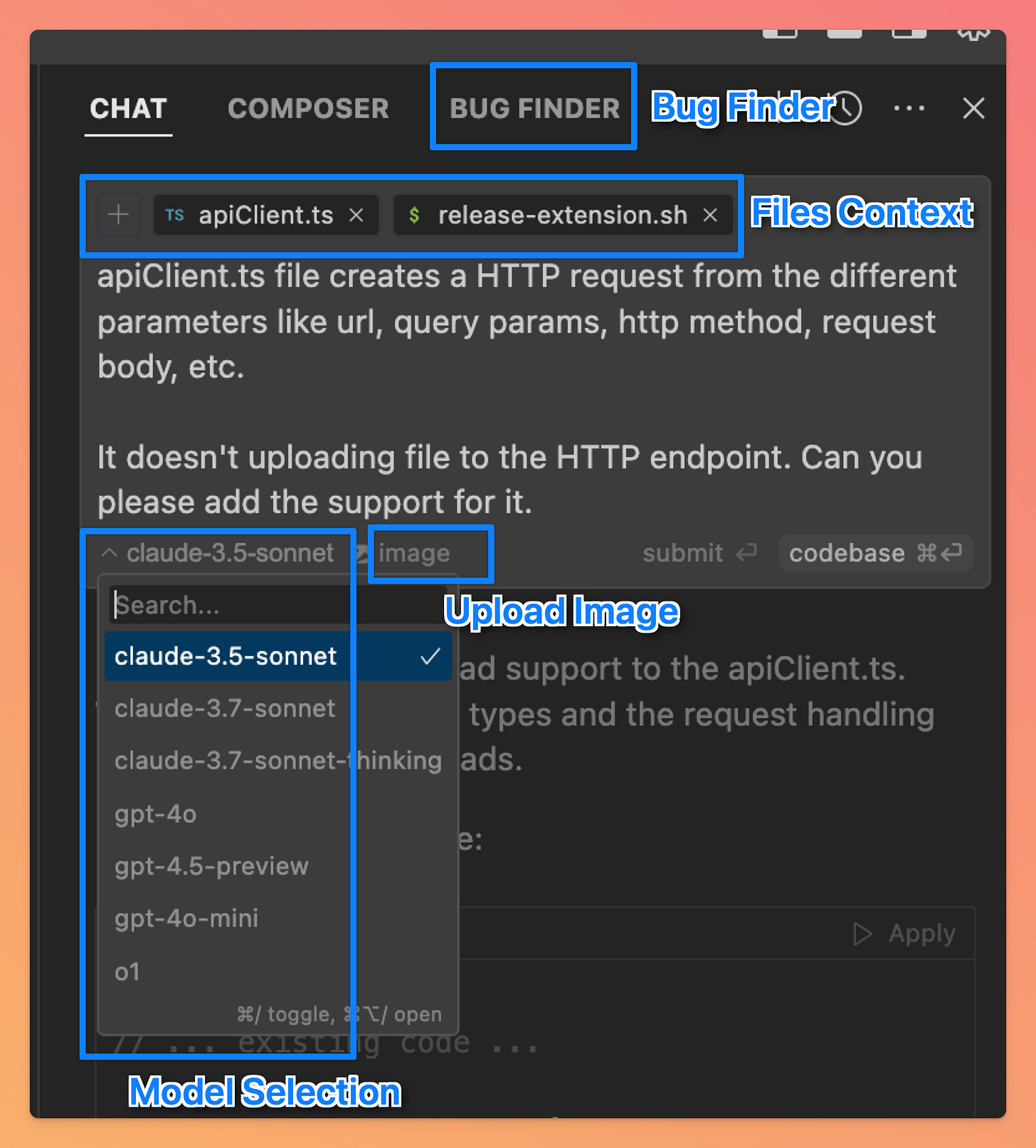

Additional Features in Cursor - File Context, Bug Finder, Model Selection, and Image Upload

When Gemini generates new code, it automatically picks context from multiple files. In the generated output, Gemini lists the files it used as the source for context.

As a user, you can’t control or restrict which files Gemini uses as sources, while Cursor allows you to control or limit the files used as the source of context.

In addition to the file context, Cursor also has some additional options:

Model Switcher - You can choose between various Claude and OpenAI (GPT and o-series) models.

Bug Finder - This can be used to find bugs in specific files. Just love this feature.

Image Upload - As mentioned in the previous section, you can upload the image of your UI (this can help in building a screen from a screenshot) or troubleshoot an error from the exception trace screenshot.

Additional Features in Gemini - Unit Test Generation and GitHub PR Reviews

Gemini supports generating unit tests. I couldn’t use it in my current codebase because there is no unit test setup, but the feature is visible (and hopefully useful in many other applications) in the top toolbar and right-click menu.

Gemini also offers a separate service called Gemini Code Assist for GitHub for reviewing pull requests on GitHub. I will be trying that out soon and updating this article.

Code-generation Accuracy

I’ve had mixed experiences with both AI assistants. After trying multiple scenarios, I found a case where Cursor generated incorrect (buggy) code and a case where Gemini didn’t produce the expected result.

Case when Cursor generated buggy code

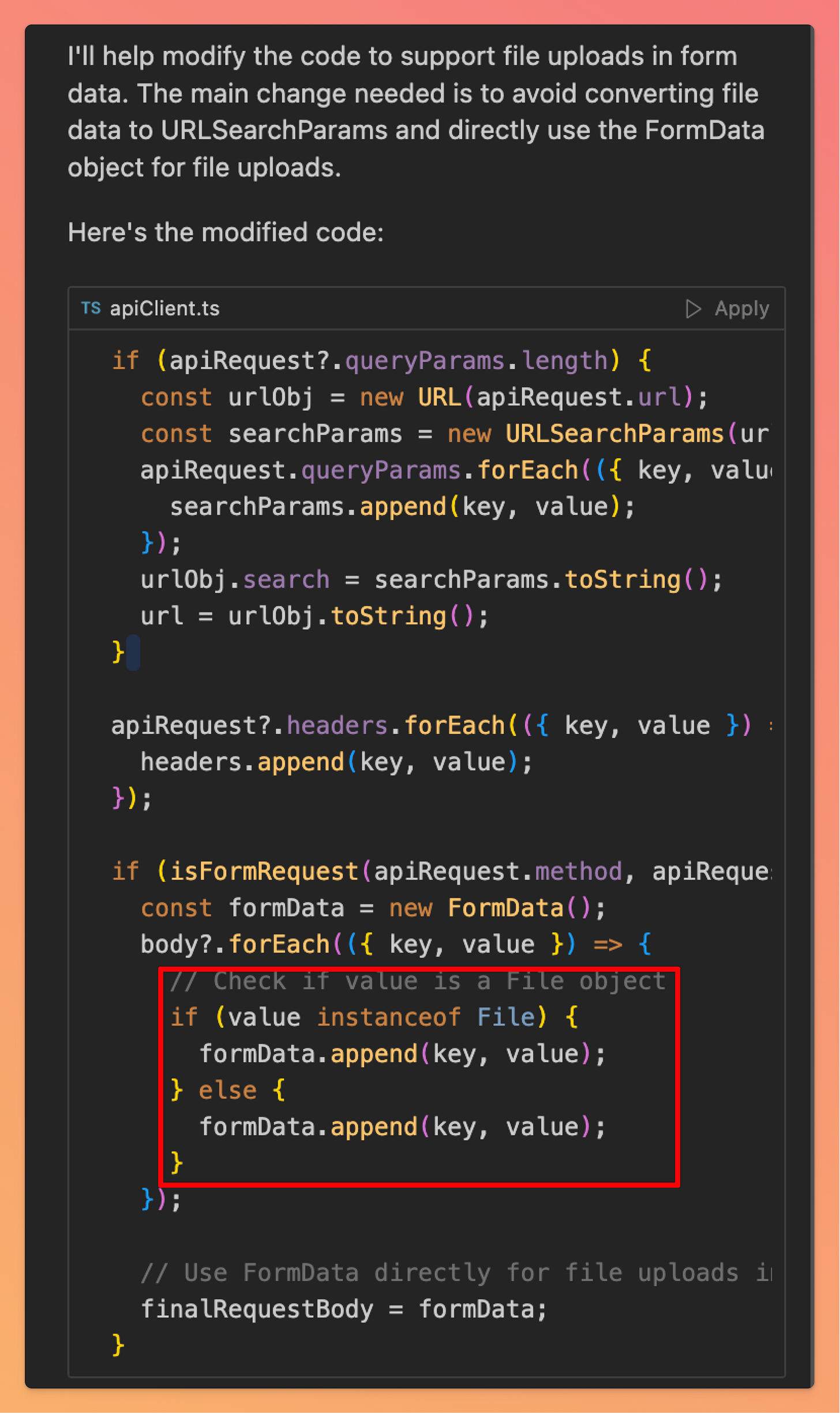

I asked Cursor to modify the apiClient.ts file to add support for uploading files in the HTTP request. Look at the generated piece of code, especially the if/else block: both branches are identical, so the condition has no effect and the block doesn’t make sense.
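The exact code Cursor generated is only visible in the screenshot, so the following is a hypothetical TypeScript reconstruction of the pattern, not the real output. The names `OutgoingBody`, `buildBodyRedundant`, and `buildBody`, as well as the content types, are assumptions chosen for illustration.

```typescript
// Hypothetical shape for illustration; not taken from apiClient.ts.
interface OutgoingBody {
  contentType: string;
  payload: string | Uint8Array;
}

// The bug pattern: the condition is checked, but both branches do the same thing,
// so file payloads are treated exactly like text payloads.
function buildBodyRedundant(body: string | Uint8Array): OutgoingBody {
  if (typeof body === "string") {
    return { contentType: "application/json", payload: body };
  } else {
    return { contentType: "application/json", payload: body };
  }
}

// What one would expect instead: the branches differ, so binary file
// content gets its own handling.
function buildBody(body: string | Uint8Array): OutgoingBody {
  if (typeof body === "string") {
    return { contentType: "application/json", payload: body };
  }
  return { contentType: "application/octet-stream", payload: body };
}
```

Code like `buildBodyRedundant` compiles and runs without errors, which is why it is easy to miss unless you read the generated diff carefully.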

Case when Gemini produced results outside the context of the prompt

I asked both AI assistants to generate a simple JS function to test whether a number is prime.

Cursor provided the desired output, while Gemini’s output was closer to a completion of whatever should come next at that line in the file than an answer to the given prompt.
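For reference, a function that satisfies this prompt could look like the sketch below. It is an illustration written in TypeScript, not the output of either assistant, and the name `isPrime` is an assumption.

```typescript
// Returns true if n is a prime number, using trial division up to sqrt(n).
function isPrime(n: number): boolean {
  // Primes are integers greater than 1.
  if (!Number.isInteger(n) || n < 2) return false;
  // 2 and 3 are prime.
  if (n < 4) return true;
  // Even numbers greater than 2 are not prime.
  if (n % 2 === 0) return false;
  // Check odd divisors only; a composite n has a divisor no larger than sqrt(n).
  for (let i = 3; i * i <= n; i += 2) {
    if (n % i === 0) return false;
  }
  return true;
}
```

A quick way to verify any assistant's answer to this prompt is to run it against a few known values such as 1, 2, 9, and 97.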

Check this 50s video 👇

You must review and verify AI-generated code before using it.

Conclusion

Thank you for reading so far. Overall, I am very satisfied with Gemini Code Assist and excited to use it further; I will update this article as I find more things worth sharing. I am using Cursor and Gemini together, which makes it easier to spot more such comparisons. I would love your input on this.

References

https://blog.google/technology/developers/gemini-code-assist-free/

https://techcrunch.com/2025/02/25/google-launches-a-free-ai-coding-assistant-with-very-high-usage-caps/

https://github.com/requestly/requestly

The comparison between Gemini Code Assist and Cursor really resonates with my own journey through AI dev tools. What strikes me most is your point about the diff feature in Gemini - that side-by-side review capability is underrated. When you're working with AI-generated code at scale, the ability to actually see what's changing before accepting it becomes critical, not just convenient.

I've been building an AI agent called Wiz using Claude Code, and one pattern I've noticed is that the "best" tool often depends heavily on the type of task. For quick autocomplete and contextual suggestions during active coding, tools like Cursor shine. But for larger refactoring or when you need the AI to understand broader system architecture, the context window size you mentioned becomes the limiting factor. That 1 million token window in Gemini is genuinely useful when you're working across multiple files that need to stay consistent.

Your observation about both tools producing buggy code is spot-on and something I wish more people talked about honestly. I've found that the real skill isn't picking the "right" AI coding tool - it's developing workflows that assume AI suggestions need verification. The tools that make code review frictionless end up winning, regardless of which underlying model they use.

I wrote about my own experience testing various AI dev tools including Cursor in a similar comparison piece: https://thoughts.jock.pl/p/cursor-vs-google-ai-studio-antigravity-ide-comparison-2025 - curious if you've tried any multi-model workflows where you use different tools for different phases of development.